AI Fairness Assessment Tool: Ensuring Responsible and Unbiased AI Systems

As artificial intelligence (AI) increasingly shapes decision-making across industries, the need for responsible and unbiased AI systems has grown with it. AI fairness assessment tools address this need by helping developers and organizations check that their AI systems are fair, transparent, and trustworthy. This article explains what these tools are, which fairness metrics they evaluate, and what several leading tools offer, to help you choose the best one for your needs.

What are AI Fairness Assessment Tools?

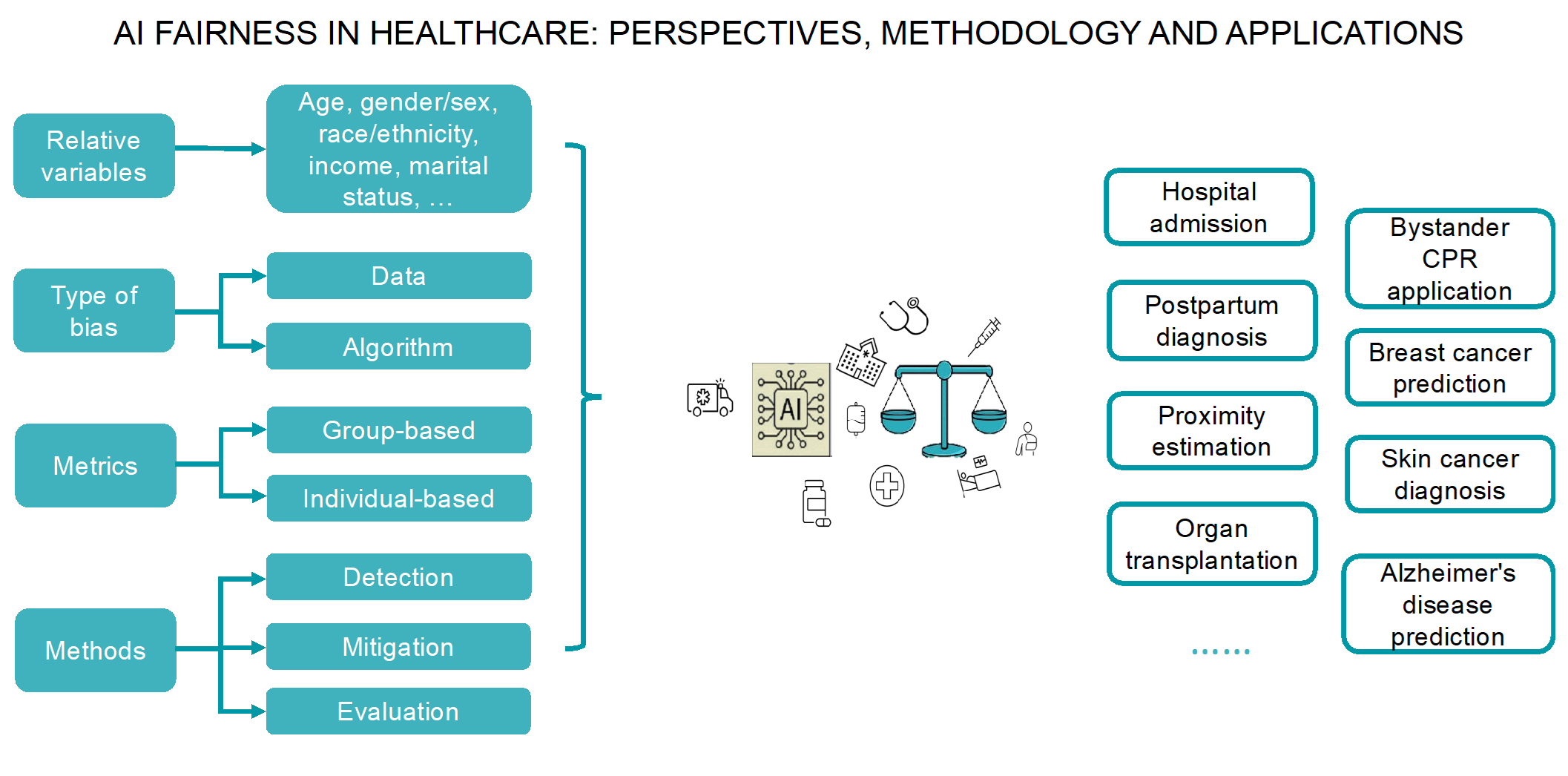

AI fairness assessment tools are specialized software applications and frameworks designed to evaluate, detect, and mitigate biases in AI and machine learning models. They let developers and researchers examine a model against fairness criteria such as demographic parity (each group receives positive predictions at a similar rate), equalized odds (each group has similar true positive and false positive rates), and individual fairness (similar individuals receive similar predictions). Measuring these criteria shows whether a model treats different demographic groups comparably, so organizations can identify and address biases in their AI systems and promote fairness, transparency, and accountability.
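
To make two of these metrics concrete, here is a minimal sketch in plain Python that computes the demographic parity difference and the equalized odds difference for a binary classifier. The function names, the toy labels, and the group names `"a"` and `"b"` are illustrative assumptions, not the API of any particular tool.

```python
from collections import defaultdict


def group_rates(y_true, y_pred, groups):
    """Per-group selection rate, true positive rate, and false positive rate.

    Assumes binary 0/1 labels and that every group contains at least one
    positive and one negative example (otherwise a rate is undefined).
    """
    counts = defaultdict(
        lambda: {"n": 0, "selected": 0, "pos": 0, "tp": 0, "neg": 0, "fp": 0}
    )
    for t, p, g in zip(y_true, y_pred, groups):
        c = counts[g]
        c["n"] += 1
        c["selected"] += p          # predicted positive
        if t == 1:
            c["pos"] += 1
            c["tp"] += p            # true positive
        else:
            c["neg"] += 1
            c["fp"] += p            # false positive
    return {
        g: {
            "selection_rate": c["selected"] / c["n"],
            "tpr": c["tp"] / c["pos"],
            "fpr": c["fp"] / c["neg"],
        }
        for g, c in counts.items()
    }


def dp_difference(rates):
    """Largest gap in selection rate between any two groups."""
    values = [r["selection_rate"] for r in rates.values()]
    return max(values) - min(values)


def eo_difference(rates):
    """Larger of the TPR gap and the FPR gap between groups."""
    tprs = [r["tpr"] for r in rates.values()]
    fprs = [r["fpr"] for r in rates.values()]
    return max(max(tprs) - min(tprs), max(fprs) - min(fprs))


# Hypothetical toy data: four people in group "a", four in group "b".
y_true = [1, 0, 1, 1, 0, 1, 0, 1]
y_pred = [1, 0, 1, 1, 0, 1, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = group_rates(y_true, y_pred, groups)
dp_diff = dp_difference(rates)
eo_diff = eo_difference(rates)
print(rates)
print("demographic parity difference:", dp_diff)
print("equalized odds difference:", eo_diff)
```

A value of 0 for either difference means the groups are treated identically under that criterion; larger values indicate a larger disparity. What counts as an acceptable gap depends on the application and is not something the metric itself decides.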

Top AI Fairness Assessment Tools

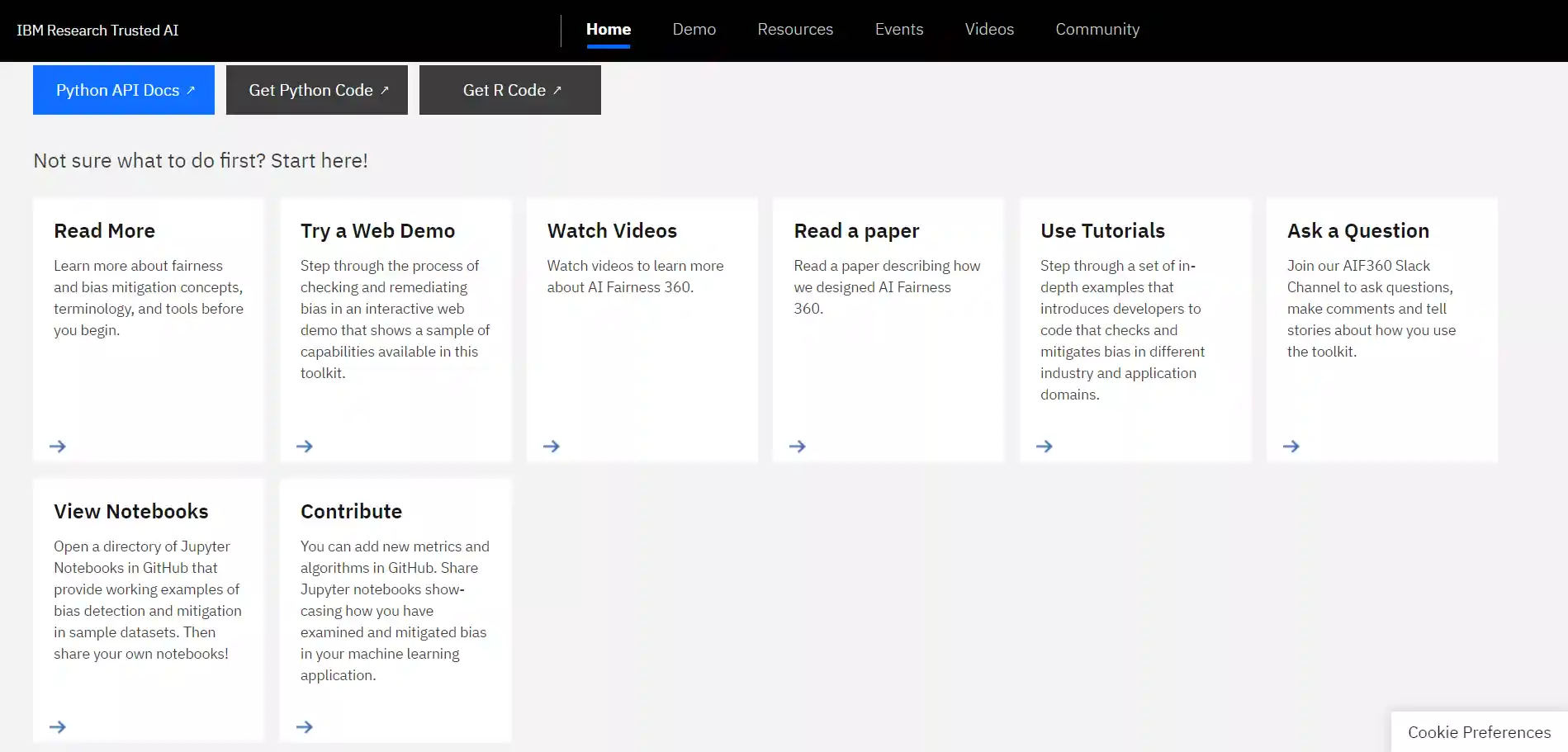

- AI Fairness 360 (AIF360): An open-source toolkit that provides metrics to check for unwanted bias in datasets and machine learning models, as well as state-of-the-art algorithms to mitigate such bias.

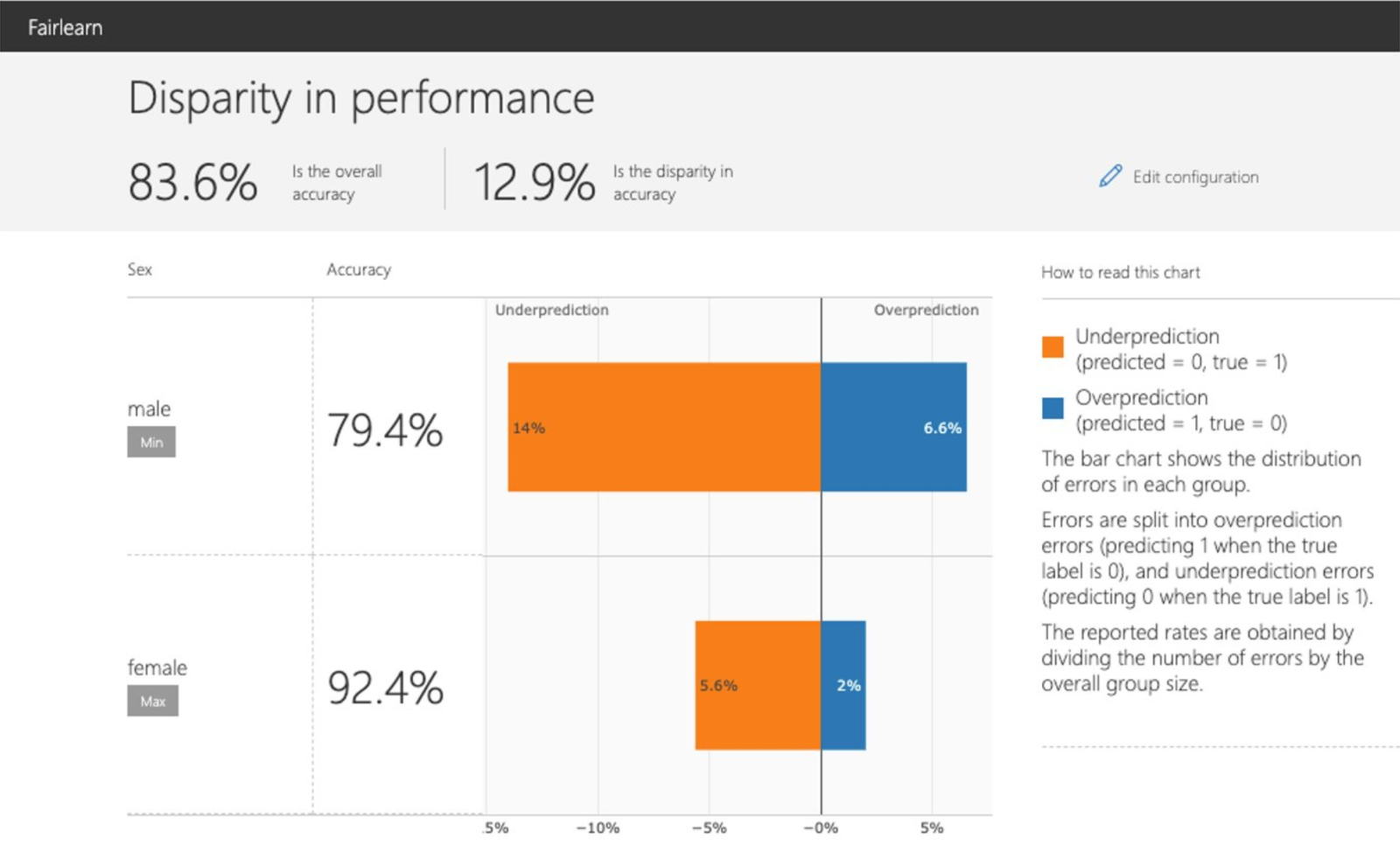

- Fairlearn: A Python toolkit that helps data scientists and developers assess and improve the fairness of their AI systems, offering an interactive visualization dashboard and unfairness mitigation algorithms.

- Nishpaksh: An AI fairness assessment tool that computes fairness metrics across protected groups and generates audit-ready reports aligned with the TEC standard, supporting transparent, fair AI in high-stakes deployments.